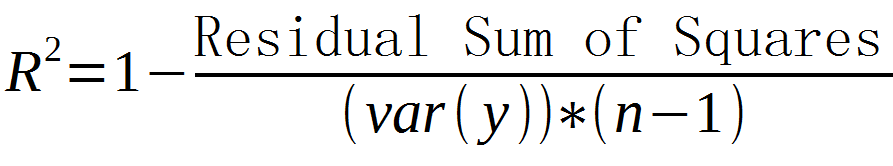

Ordinary least squares linear regression. LinearRegression fits a linear model with coefficients w = (w1, ..., wp) to minimize the residual sum of squares between the observed targets in the dataset and the targets predicted by the linear approximation. One option controls whether to calculate the intercept for this model.

In statistics, the residual sum of squares (RSS), also known as the sum of squared residuals (SSR) or the sum of squared errors of prediction (SSE), is the sum of the squares of the residuals (the deviations of the predicted values from the actual empirical values of the data). It is a measure of the discrepancy between the data and an estimation model: a small RSS indicates a tight fit of the model to the data. It is used as an optimality criterion in parameter selection and model selection.

Sums of squares appear in many contexts, and the sum of squared residuals is a special case of a sum of squares. For a least squares fit that includes an intercept, total sum of squares = explained sum of squares + residual sum of squares, so the RSS is one component of the total sum of squares. For a proof of this in the multivariate ordinary least squares (OLS) case, see partitioning in the general OLS model. In Excel, the sum of the squared differences between observed and predicted values is called the residual sum of squares, ssresid; Excel then calculates the total sum of squares, sstotal.

The approximate distribution of the residual sum of squares in a linear model fitted with a weighted analysis has also been discussed in general terms.

See also: Square Operation, Errors and Residuals in Statistics, Optimality Criterion, Model Selection.
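The quantities described above can be written compactly. The notation here is an assumption introduced for illustration and does not come from the original text: $y_i$ is an observed value, $\hat{y}_i$ the value predicted by the model, $\bar{y}$ the mean of the observed values, and $n$ the number of observations.

$$
\mathrm{RSS} = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2, \qquad
\mathrm{ESS} = \sum_{i=1}^{n} (\hat{y}_i - \bar{y})^2, \qquad
\mathrm{TSS} = \sum_{i=1}^{n} (y_i - \bar{y})^2
$$

$$
\mathrm{TSS} = \mathrm{ESS} + \mathrm{RSS}
$$

The second identity is the decomposition stated in words above; it relies on the model being fitted by least squares with an intercept term.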
Generally, a lower residual sum of squares indicates that the regression model explains the data better, while a higher residual sum of squares indicates that it explains the data poorly. In other words, the RSS captures the variation in the dependent variable that the regression model cannot explain. As a sum of squared errors (SSE) measure, the residual sum of squares essentially measures the variation of the modeling errors.
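To make this concrete, here is a minimal sketch in plain Python. It fits a one-variable least squares line in closed form, computes its RSS, and compares it with the RSS of an arbitrary alternative line. The data values and the helper names `fit_line` and `rss` are hypothetical, chosen only for illustration.

```python
def fit_line(xs, ys):
    """Closed-form ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return intercept, slope


def rss(xs, ys, intercept, slope):
    """Residual sum of squares of the line against the observed data."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))


# Made-up example data (not taken from any real dataset).
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

intercept, slope = fit_line(xs, ys)
rss_ols = rss(xs, ys, intercept, slope)

# An arbitrary alternative line, y = 1.5 * x, for comparison.
rss_other = rss(xs, ys, 0.0, 1.5)

# Total and explained sums of squares for the least squares fit.
y_mean = sum(ys) / len(ys)
tss = sum((y - y_mean) ** 2 for y in ys)
ess = sum((intercept + slope * x - y_mean) ** 2 for x in xs)

print("RSS of least squares line:", rss_ols)
print("RSS of alternative line:  ", rss_other)
print("TSS:", tss, " ESS + RSS:", ess + rss_ols)
```

The least squares line has the smaller RSS of the two, which is what "a tighter fit" means here, and the total sum of squares matches the explained plus residual sums of squares up to floating-point rounding.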
